Recent Posts

-

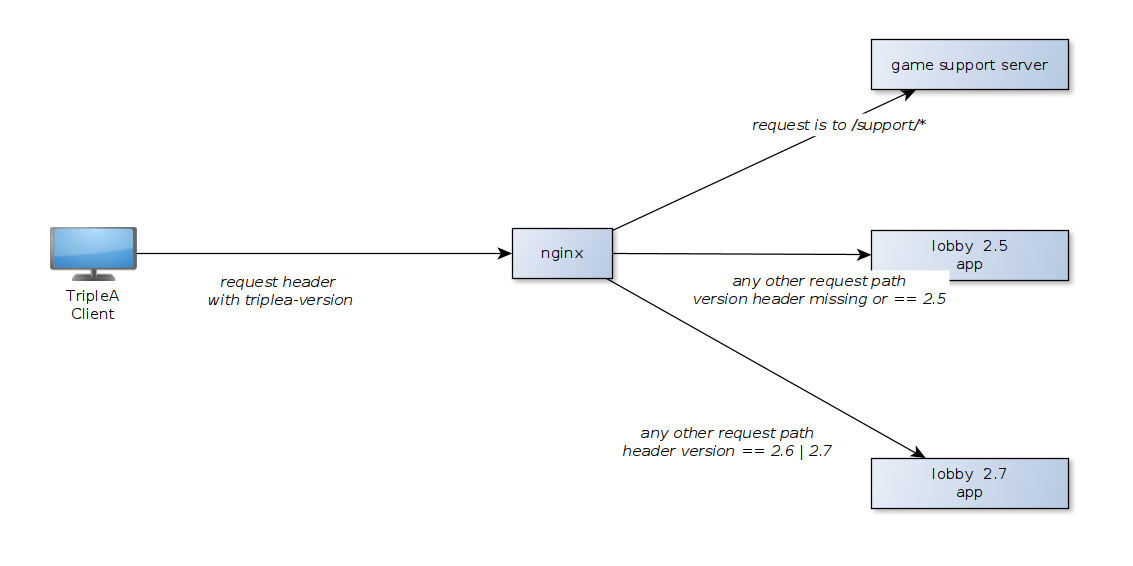

@LaFayette Why was this release strategy chosen? What are the pros and cons? Other programs use a release strategy I like: they notify you of an update every now and then (say, every 1-2 weeks). Then, as the user, you have the option to install the update, ignore it for now, or ignore it completely and not be reminded of it anymore.

I think there are definitely people who would like to have the latest features and fixes. If they are only notified once every three or six months, they would have to go to the website themselves to see if there is a later version, somehow find out what has changed, and decide if it's worth updating.

I think it would be better to have no fewer than 4 releases a year, and probably more like 6 or 12 (simply every month). If we did monthly releases, in an ideal world, players would get a pop-up the next time they start TripleA after a release, stating that there is a new recommended release, along with an overview of what has changed. Players would then have the option to install it (ideally with the program automatically uninstalling the current version and installing the new one, instead of having to do that yourself), to ignore the update for now and be reminded on the next start-up, or to ignore it completely and only be reminded once the next recommended release is out.
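To make the three options concrete, here is a minimal sketch of the start-up check described above. It is not how TripleA actually handles updates; the names `UpdateState`, `Choice`, `should_prompt`, and `apply_choice`, and the version tuples, are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple

Version = Tuple[int, int]  # hypothetical (major, minor) version, e.g. (2, 7)


class Choice(Enum):
    INSTALL = "install"            # install the new recommended release
    REMIND_LATER = "remind_later"  # ignore for now, ask again on next start-up
    SKIP_RELEASE = "skip_release"  # ignore this release completely


@dataclass
class UpdateState:
    installed: Version
    skipped: Optional[Version] = None  # release the user chose to ignore completely


def should_prompt(state: UpdateState, latest: Version) -> bool:
    """Show the pop-up only for a newer release the user has not skipped."""
    if latest <= state.installed:
        return False
    return state.skipped is None or latest > state.skipped


def apply_choice(state: UpdateState, latest: Version, choice: Choice) -> UpdateState:
    """Return the state to store after the user answers the pop-up."""
    if choice is Choice.INSTALL:
        return UpdateState(installed=latest, skipped=None)
    if choice is Choice.SKIP_RELEASE:
        return UpdateState(installed=state.installed, skipped=latest)
    # REMIND_LATER: nothing is stored, so the next start-up prompts again.
    return state
```

With this shape, "ignore completely" stays quiet only until a later recommended release appears, which matches the behaviour suggested above.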

One argument against monthly releases is that the program might not see enough updates in that time period. As I have started contributing only recently, I might have jumped in at a time that happened to have a lot of activity, but even so I cannot imagine that nothing of importance gets done to the program in an entire month. There is always some kind of improvement that would be nice to have for the user, regardless of how small it is. And once a month is infrequent enough that it wouldn't start feeling intrusive to a user, something that could happen with weekly releases. You don't want to bombard them with updates, as that can become annoying over time. Twice a year sounds like way too little. A lot can happen during that time, and a release might then introduce too many changes and updates at once.

Another argument would be that, with frequent releases, each release wouldn't get enough new downloads to prevent the TripleA installer from being flagged by Windows Defender when running. I can see that, but I think the majority of the downloads would come from users updating, not so much from new users downloading the program. So increasing the time between releases might not help as much as it might seem. But we would have to do some experimentation on that.

Given everything, I would suggest simply starting with quarterly releases. That sounds like a good start. We can then observe the general feedback from users and keep an eye on the number of downloads.

-

Would pre-release versions be available with any change? I am always using the pre-release.

-

On the other hand, to minimize the risk of burn-out in doing such a thing (which I guess is easily done but very unengaging, especially if problems emerge right after the community at large gets to "test"), the best choice would be doing it only once per year, at exactly the time of the year (whatever it is) when developers tend to have more free time and more willingness to use it to manage TripleA. Add to that that, if something happens once a year, it is much easier for a person to remember immediately "it's TripleA release time", so it can more easily become part of the tribal knowledge of the community once the process becomes consolidated (meaning that people will be more likely to accept problems as part of an expected process rather than feeling like the developers are randomly throwing lemons at them).

-

So, if we do twice a year, that would be a Nov 1st recommended release and then a May 1st recommended release. That is probably as good a place as any to start.

As a non-developer, I would go with whenever tends to be the period when developers have more free time (asking the other main developers).

(I think more people being online during releases can be argued either way about being a good or bad thing.)

I would actually go with quarterly "releases", so that when one gets skipped, it becomes a 6-month wait rather than a 1-year wait (and 9 months instead of 1.5 years if two get skipped).
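The arithmetic behind that comparison can be checked with a small sketch, assuming releases are evenly spaced over the year. The function name `months_between_releases` is only illustrative.

```python
def months_between_releases(releases_per_year: int, skipped: int) -> float:
    """Months from one shipped release to the next when `skipped`
    scheduled releases in between are missed (evenly spaced schedule)."""
    interval = 12 / releases_per_year
    return interval * (skipped + 1)


# Quarterly versus twice a year, with one or two releases skipped.
for per_year in (4, 2):
    for skipped in (1, 2):
        print(per_year, skipped, months_between_releases(per_year, skipped))
```

For a quarterly schedule this gives 6 and 9 months; for twice a year it gives 12 and 18 months, the same figures as in the post.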